Designing Pivotal Practice: An AI Roleplay Experience for Manager Growth

Traditional leadership training gave managers theory but little chance to practice. I led the end-to-end design of Pivotal Practice, an AI-powered roleplay product that helped managers rehearse real workplace conversations through immersive, feedback-driven simulations. The product generated $1.5M in revenue, drove a 63% improvement in measured skills, and earned an average learner satisfaction score of 88.

Praxis Labs

Praxis Labs is an AI-powered learning platform on a mission to make workplaces work better for everyone. Through coaching, roleplay, and skill assessment, we help people develop the critical human skills needed to succeed as leaders. Organizations use our platform to build these skills at scale and drive higher engagement and performance.

Pivotal Practice 1.0

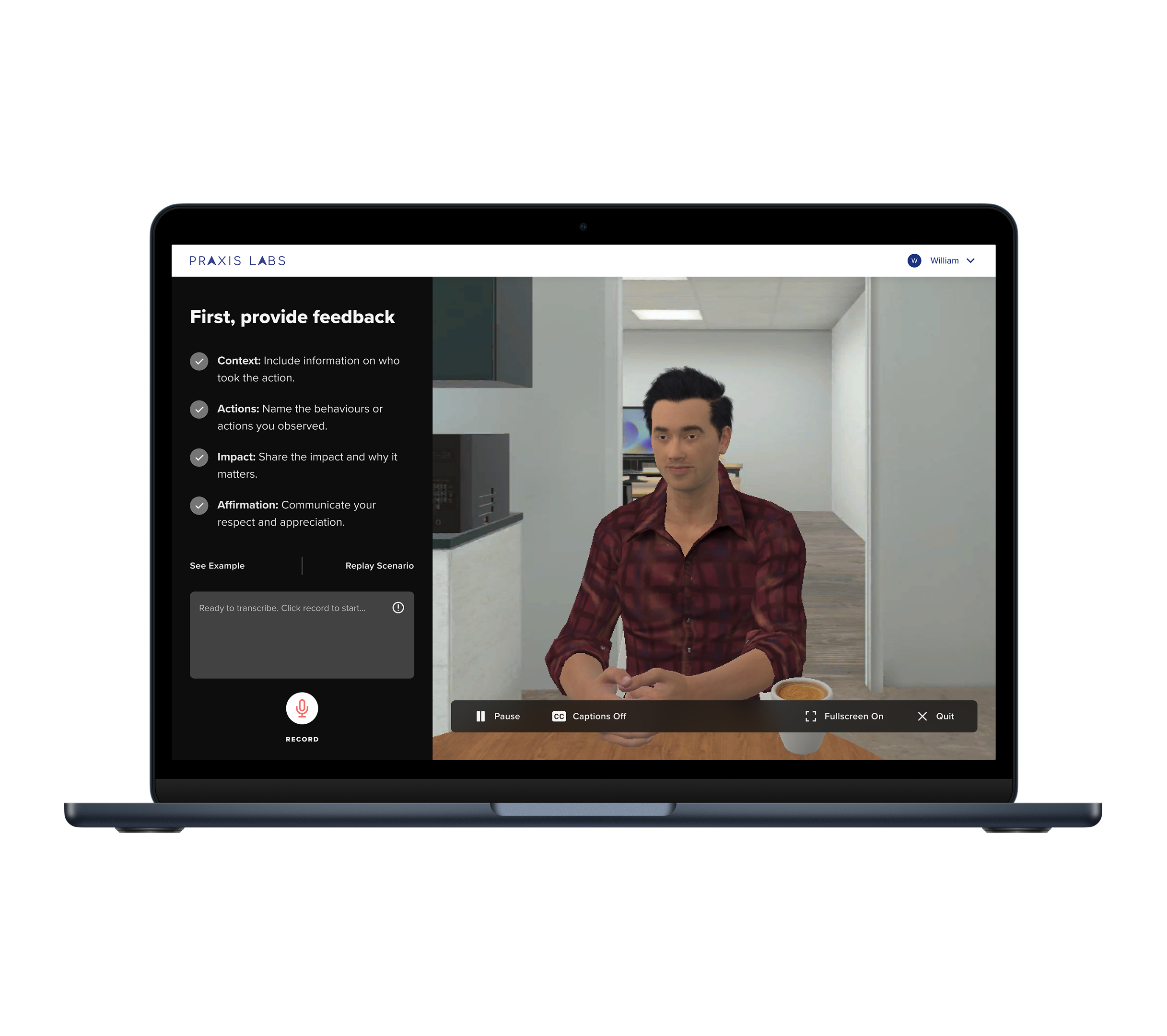

Pivotal Practice 1.0 was an earlier version of the product, built within Praxis’s web-immersive platform, as a microlearning experience for busy managers. It mostly used scripted scenarios, with learners selecting responses, speaking scripted answers, and progressing through simulation flows based on their choices.

Role

As the sole designer for Pivotal Practice, I led the end-to-end design of the experience, shaping the conversation experience, design system, learning flow, and AI interaction.

Team

1 Product Owner

2 Learning Designers

2-3 Software Engineers

1 QA Engineer

Responsibilities

User Research

Usability Testing

Product Design

Visual Design

Design Systems

Design Process

Discover

Building on 1.0 research and feedback, we ran targeted discovery with client design partners and internal audits to refine the product vision

Define

Aligned on core problem framing and success metrics; prioritized design goals for voice, coaching, and learner experience consistency

Develop

Rapid prototyping and weekly design sprints with continuous stakeholder feedback to improve the core learner experience

Deliver

Launched in beta with design partner clients (January 2025), followed by general availability (April 2025) on a rebranded, AI-native platform with 14 scenarios

01

Discover

The Problem

Traditional leadership training often fails where it matters most: in real-life application.

Too much theory, not enough practice

Hard to scale consistently across large organizations

Limited ways to measure whether people are improving

02

Define

The Challenge

We built an immersive, scalable, and measurable solution with Pivotal Practice 1.0. The product resonated but had limits:

Clients wanted more variety and org-specific customization

Learners expected more dynamic, responsive interactions

Content creation was slow, relying on manual scripting and voice acting

Our Approach

For Pivotal Practice 2.0, we reimagined the product using GenAI to:

Speed up content creation and enable org-specific customization

Make conversations more dynamic, human, and responsive

Test quickly and build a scalable, immersive learning experience that’s measurable and ROI-driven

03

Develop

Evolving the Experience

Phase 1: Concept Validation

Tested early text and voice prototypes with learners and clients to validate memory, summaries, and just-in-time coaching flow.

Phase 2: Product Refinement

Ran fast 1–2 week design–build–test cycles, with usability tests measured against success targets:

80%+ ease of use

70%+ coaching helpfulness

70%+ memory recall

70%+ would continue using

40%+ would be very disappointed if it were removed

Phase 3: Scenario Expansion

Tested the experience across leadership challenges, expanding from feedback on communication to performance coaching and leading team change.

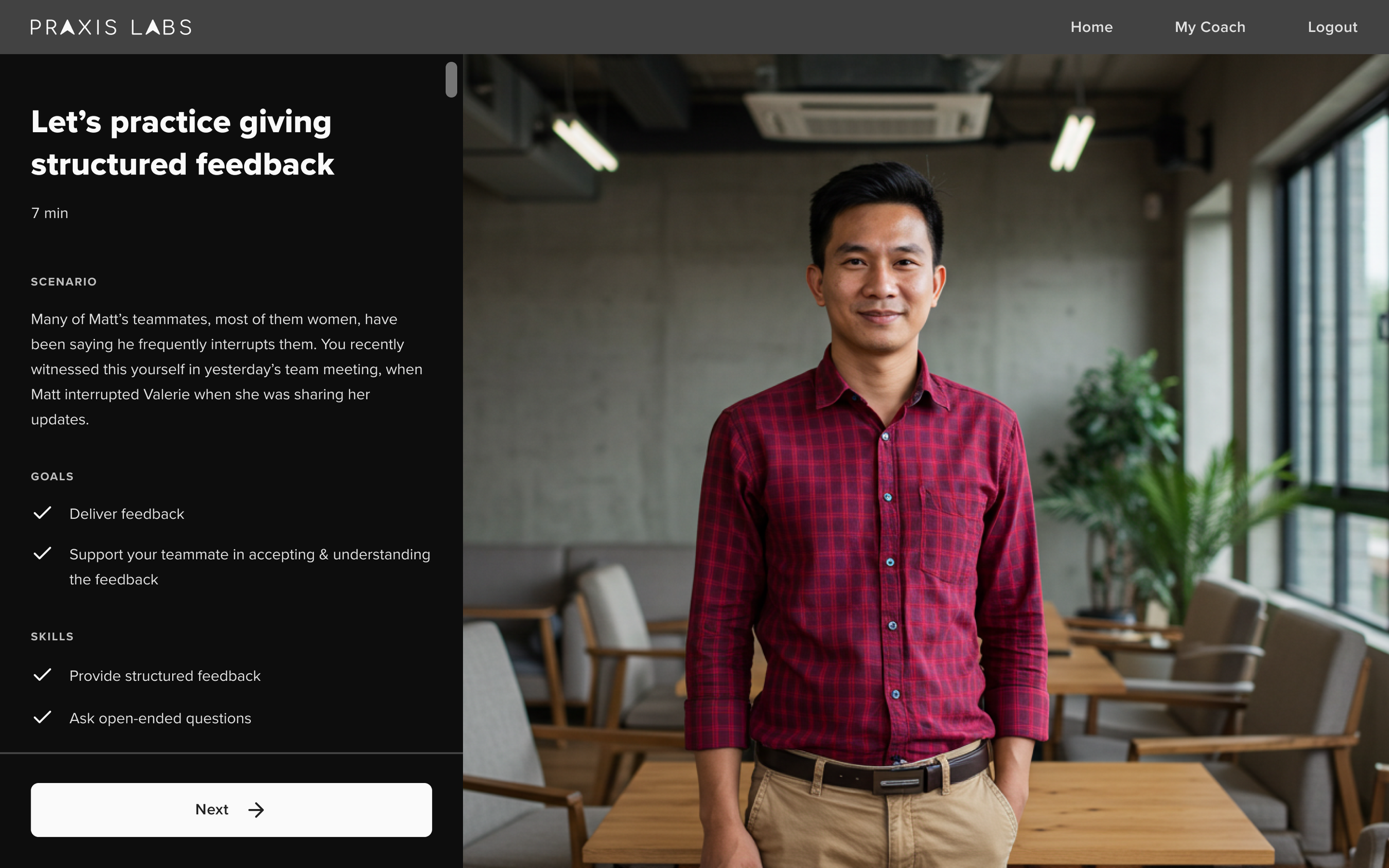

Set clear expectations so learners feel prepared

Early testing showed that our first intro page didn’t give learners enough information. They felt unclear about the conversation goal and how to know when they had finished.

I redesigned it to better prepare them without overloading them.

Outlined the scenario and coaching goal

Explained which skills they would practice and why

Used clear, simple language and reduced distractions

Learners liked the quick onboarding, felt more prepared, and performed better in the roleplay.

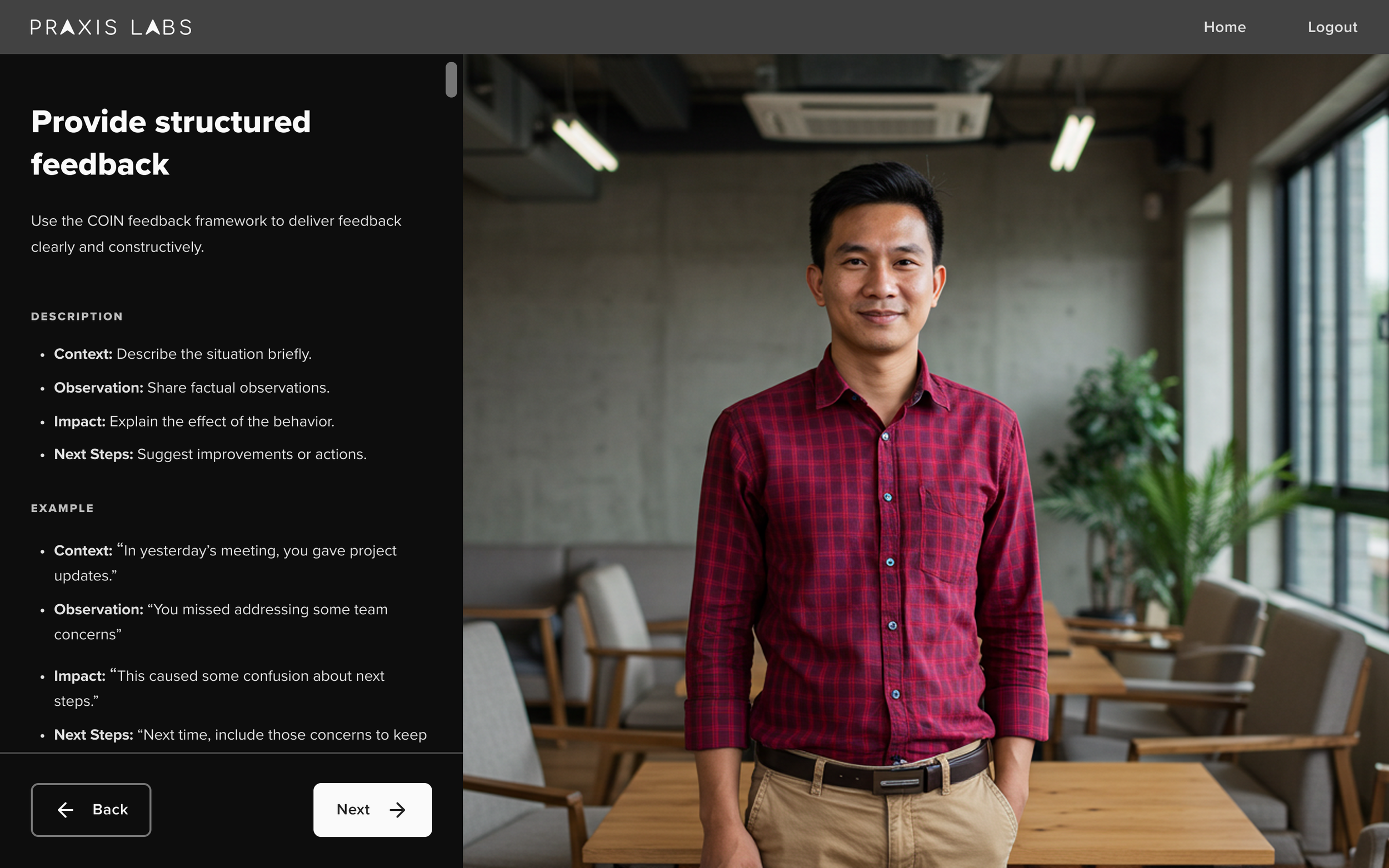

Preview key skills so learners know what to practice

I added a Skills Preview step so learners could see the 2–3 key behaviors they'd practice. Early designs let learners skip this step, but many who skipped it felt confused.

I changed the flow to require reviewing skill pages.

Provided skill purpose, plain-language description, and example

Allowed experienced learners to quickly tap through if they wanted

Prevented frustration by giving everyone a consistent preview

The page was scannable, structured, and comprehensive, helping learners quickly understand and acquire new skills without overwhelming them.

Design the roleplay like a real conversation

Early user testing revealed that many learners didn't realize they should speak aloud and couldn't tell whether their audio was being picked up.

I redesigned the roleplay screen to feel more natural and intuitive, like a real video meeting.

Simplified the layout to remove unnecessary UI elements

Had the AI character speak first to signal it was a live conversation

Added voice activity indicators and mic input feedback for clarity

Included live captions to support accessibility and reinforce what was said

After these updates, learners in testing no longer had trouble knowing how to start or interact in the practice. When an audio or connection issue occurred, the live captions helped them follow the conversation.

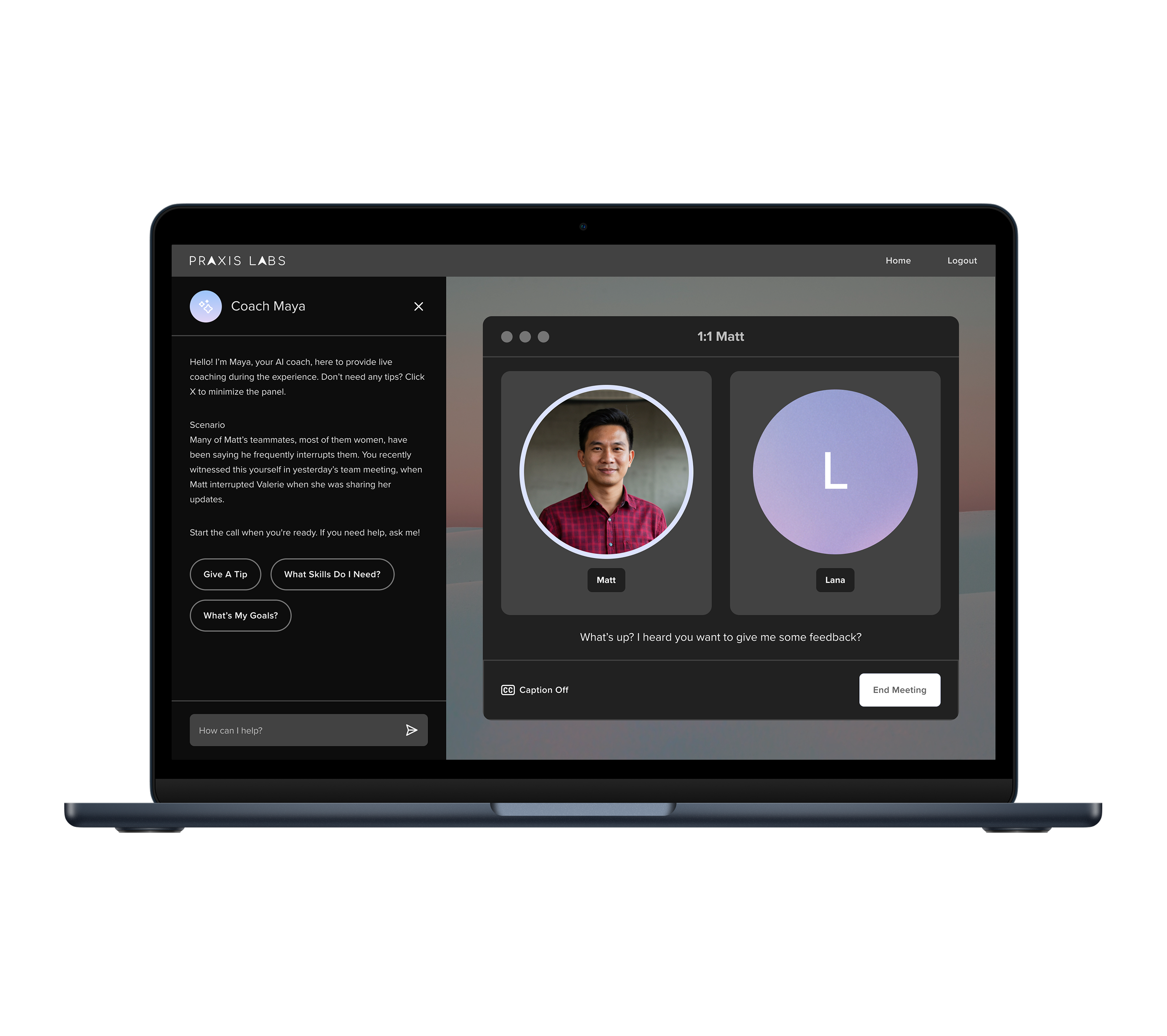

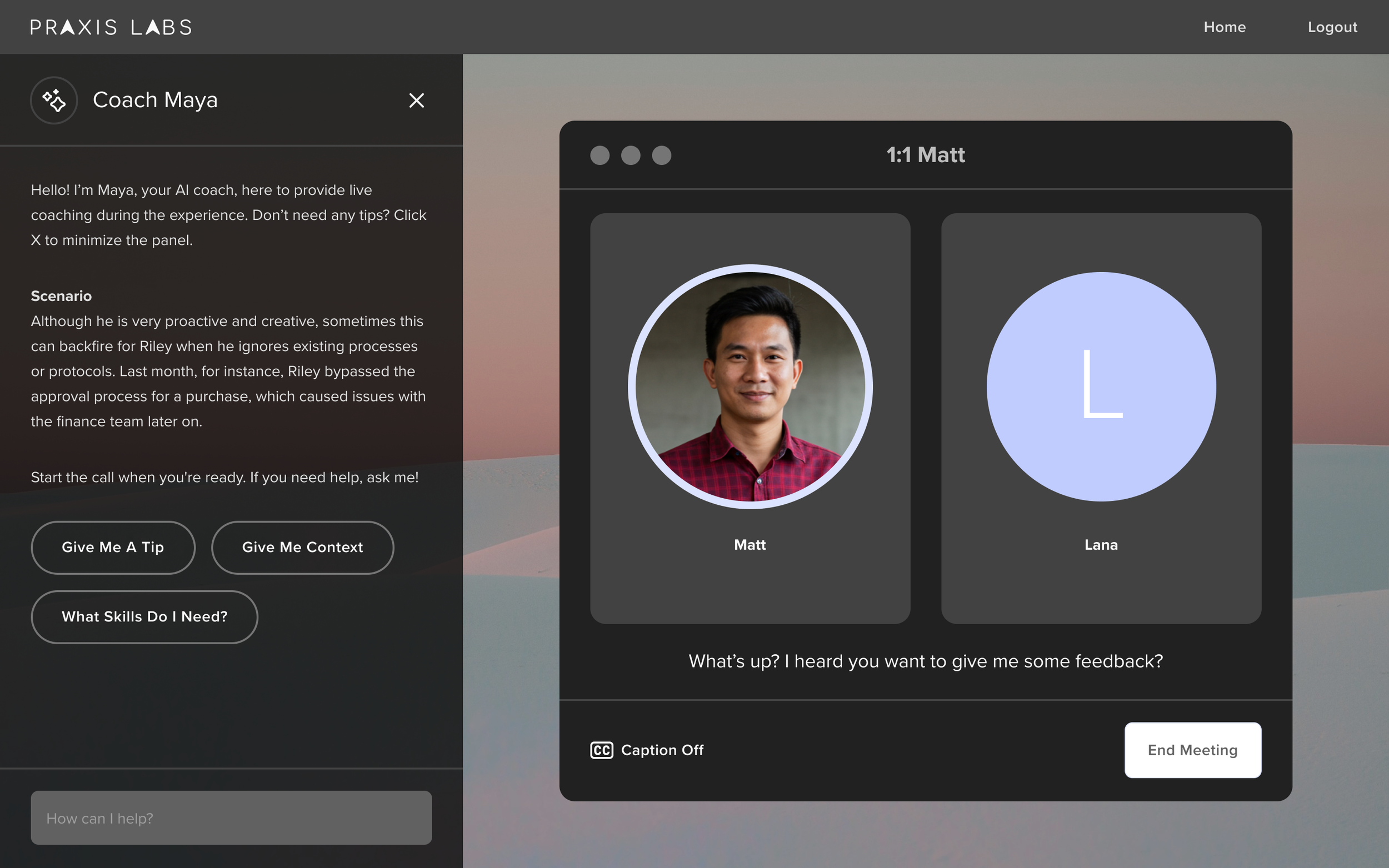

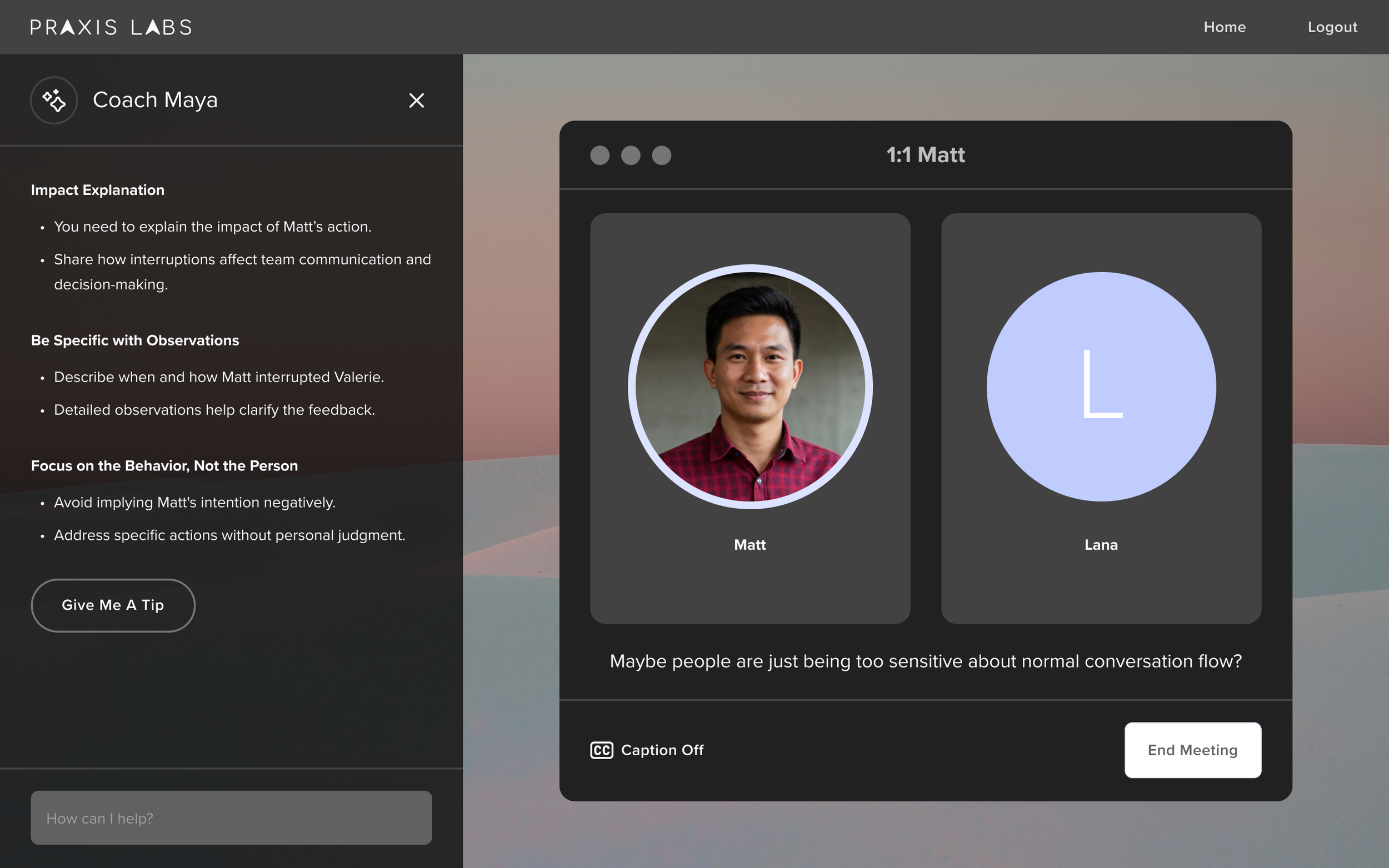

Offer in-the-moment coaching so learners don’t get stuck

Early on, learners sometimes struggled to move conversations forward or understand their goal. We introduced Maya’s real-time coaching to provide support in the moment. This became a differentiator from other tools.

Repeated the scenario and coaching goal in the side panel for reference

Delivered a single automatic tip at around 34 seconds to avoid disruption

Allowed learners to request additional tips on demand

Redesigned coaching as a side panel to reduce visual clutter and distractions

Multiple testing rounds helped us fine-tune the tone, timing, and overall experience.

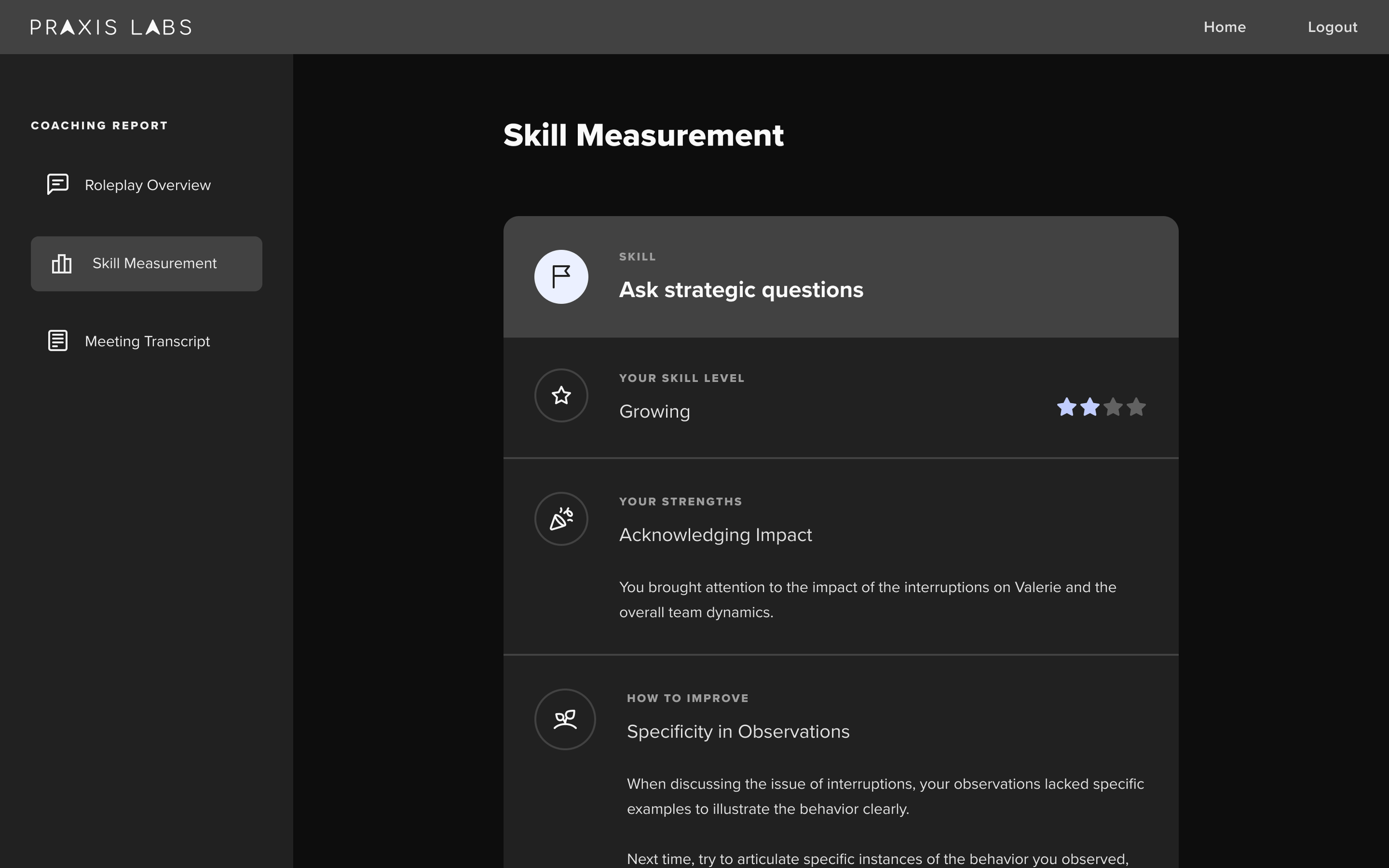

Make feedback easier to understand and trust

In early demos, clients weren't sure how we calculated skill scores or what we measured. The original overview was too vague, and the feedback wasn't organized in an actionable way. I redesigned the report to:

Show how well the learner achieved the overall goal

Group skill measurement by specific skills

Add a transcript so learners can review where to improve

Replace unclear numeric scores with intuitive star ratings

Learners valued reviewing responses and found feedback more trustworthy, increasing report engagement.

Reduce distractions so learners can focus on practice

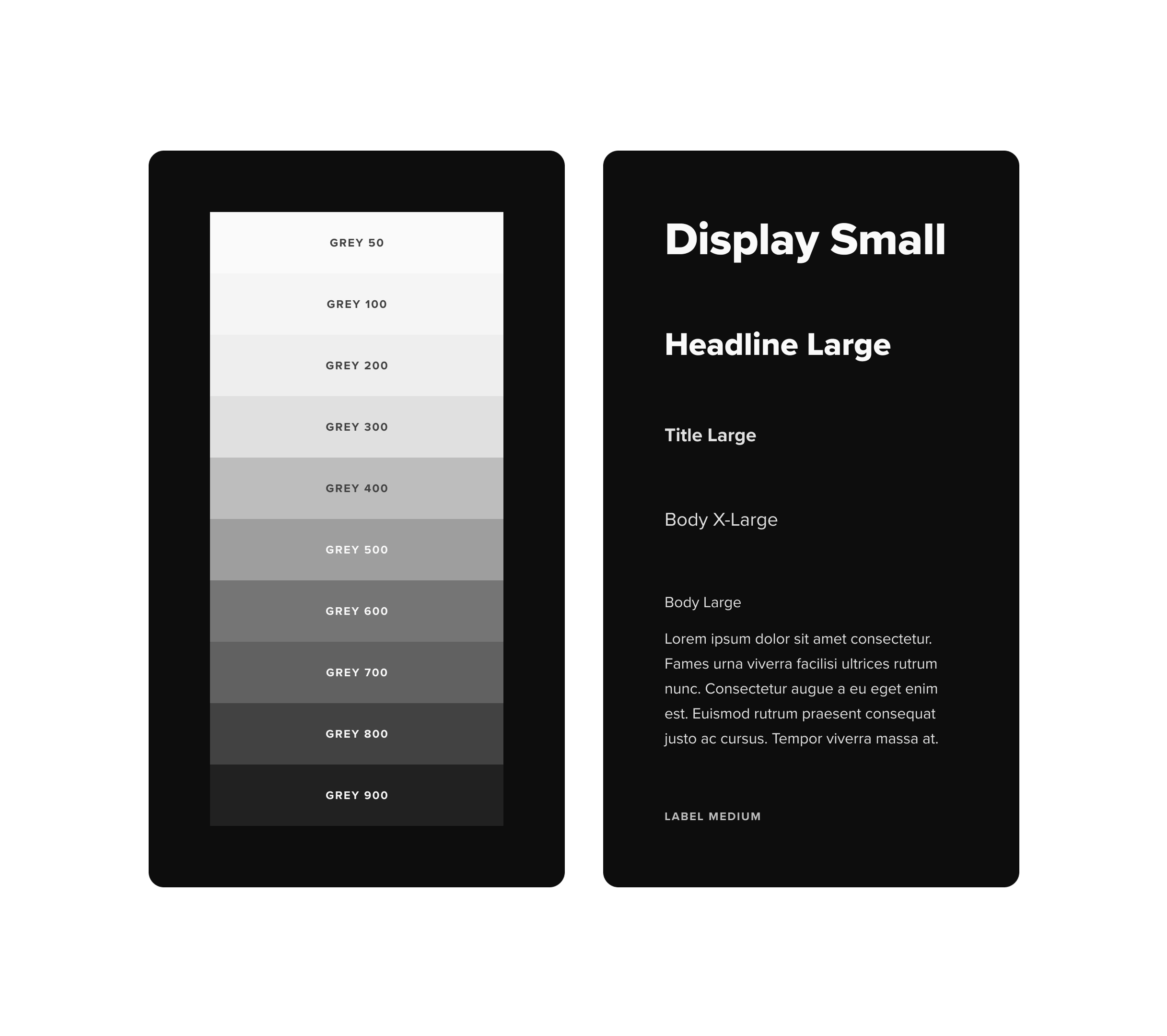

We needed a system that supported learning without distracting from it. I designed a visual design system that reduced cognitive load, aligned with our product brand, and applied accessibility best practices.

Minimized visual noise and simplified layouts to lower cognitive load

Applied consistent patterns for hierarchy, typography, spacing, and reusable components

Used a dark theme with off-white text designed to lessen eye strain and keep focus on the conversation

Ensured accessibility with left-aligned text, 16px minimum font size, high contrast, and generous spacing

The design remained clean and readable, helping learners to focus on practice and reflection. Clients gave positive feedback on the new design and rebrand.

Design believable characters to make practice more immersive

To make practice feel more immersive, I translated character backstories and scenarios into visuals and voice design that reinforced the narrative.

Established a visual prompt library to generate diverse, high-quality character images with subtle cues that reflected each scenario

Created a voice design prompt library so tone and language matched character roles, backstories, and context

Iterated continually to maintain quality and consistency as AI models evolved (ImageFX, Hume EVI3, Claude Sonnet)

These choices helped learners immediately understand the dynamics of each scenario and made conversations feel more authentic and engaging.

04

Deliver

Learner Journey Walkthrough

After multiple rounds of iteration and testing, we created a seamless experience designed for clarity, presence, and psychological safety.

The learner journey flows through five core stages:

Intro Page

Skill Pages

Meeting Simulation

In-the-Moment Coaching

Coaching Report

05

Impact

Business Outcome

Reignited Growth

Re-engaged accounts at risk of churn and unlocked stalled deals

$1.5M in New Revenue

Generated $1.5M in new and renewal deals within 6 months of beta

Enterprise Adoption

Adopted by enterprise clients including Amazon, Salesforce, Uber, Accenture, ADP, Conagra

Faster Creation

Reduced scenario creation time from 2 months to 2 weeks

Learner Outcome

88

Average satisfaction (outperformed the 64 eLearning benchmark)

89%

Believed wider adoption would positively impact team performance

86%

Reported higher confidence applying skills on the job

63%

Improvement across restating, giving feedback, and validating emotions

90%

Said the experience improved their performance on the job

88%

Said they would use it to prepare for future conversations

Learner & Client Quotes

This is the best on-demand learning experience for managers I’ve ever used. The opportunity to practice challenging conversations and have real-time discussions that could go anywhere is really something special.

People Manager

One of our biggest challenges is scaling our Learning team’s reach and impact. Many of our people leaders struggle with difficult conversations, but we can only facilitate workshops for up to ~200 leaders per year. I think this is a powerful tool to help us scale.

Fortune 500 Company

This has an inclusion lens built-in. When you have a performance conversation, it’s usually something else impacting it, and with the dynamic simulation, it requires people to move more quickly, incorporate compassionate listening, and be responsive, just like in real-life.

Fortune 500 Company

As a newer manager, I’ve already had to have some tough conversations with direct reports, and it’s been tough to know if I’m “doing it right.” This experience practicing having hard conversations with AI was really helpful, as it has allowed me to have space to “mess up”, get feedback and get more practice is a safe space.

People Manager

06

Learnings

Designing an AI Product

Design flexible systems

You create templates, guardrails, and adaptable flows, not scripts, so the product feels intentional while adapting to unpredictable user inputs.

Design for unpredictability

We built in fallback behaviors and system protections to maintain learner experience even when AI responses were unexpected.

Test relentlessly

We stress-tested edge cases and adversarial conversations to refine fallback behaviors and protect learner experience when AI responses were unpredictable.

Designing with AI

Experiment constantly

I use AI across writing, research, visuals, prototyping, and testing to explore where GenAI excels and where it struggles.

Stay current

I subscribe to newsletters from UX and education leaders who track AI advancements to continuously improve my approach.

Work with AI as a creative partner

AI helps me move faster, unstick design problems, and explore new ideas. I see AI as both collaborator and co-creator in my design process.

AI Team Collaboration

Create fast feedback loops

We organized as a GenAI Tiger Team using a Build + Recon model. The Build team prototyped weekly; the Recon team gathered external feedback bi-weekly to refine priorities.

Embrace ambiguity and experimentation

Traditional roles blurred: designers, PMs, and learning scientists collaborated on prompt design and prototyping. We defined “good enough” as “not obviously wrong” to ship quickly and keep momentum.

Build rituals to stay aligned

We held daily check-ins, weekly prioritization meetings, and cross-team collaboration sessions. During early development, we ran Tiger Feast, a weekly review of user feedback and test videos to guide iteration.